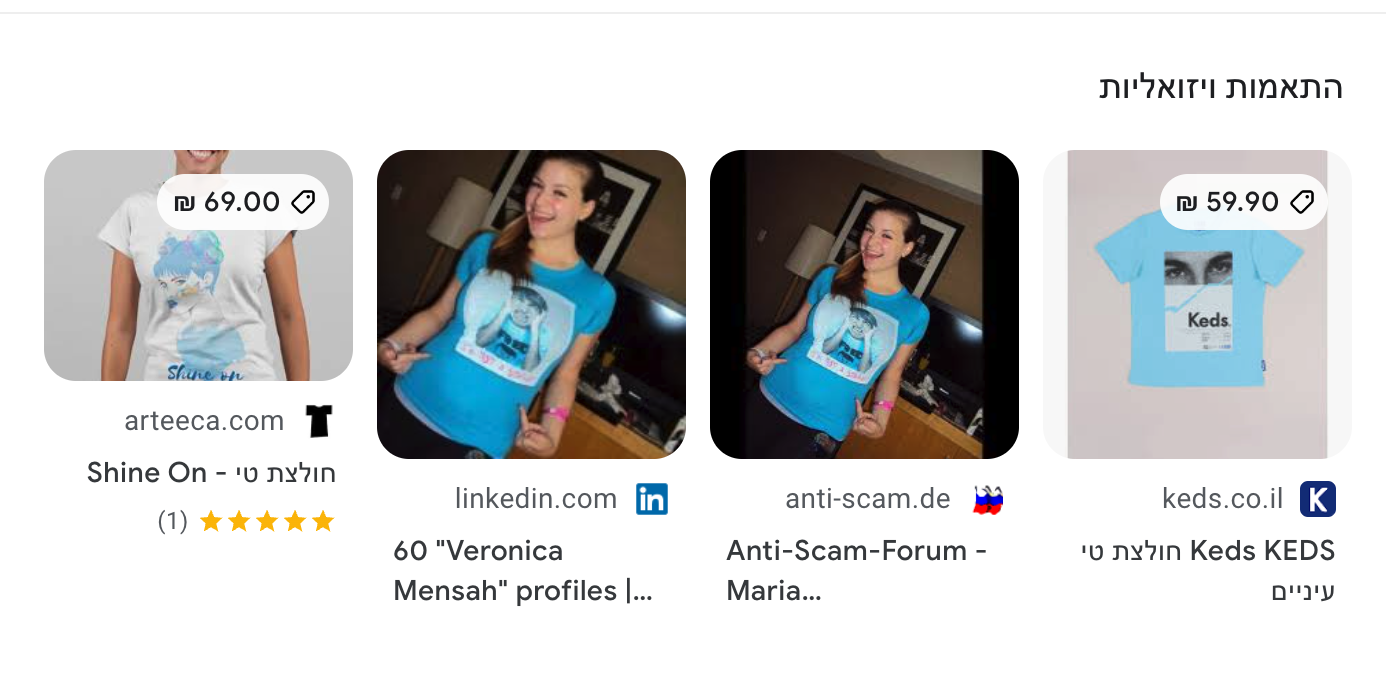

A couple of days ago, I got this phishing message on Linkedin

The English is pretty bad and the location of the person in Africa makes it suspicious. It immediately jumped to my mind that this is a great opportunity to test reverse image search capabilities to check the legitimacy of the sender.Large Scale Machine Learning and Other Animals

Sunday, August 28, 2022

Can LinkedIn filter scammers?

Tuesday, May 24, 2022

Large Image Datasets Are a Mess!

Here is my latest blog post on Medium: https://medium.com/@amiralush/large-image-datasets-today-are-a-mess-e3ea4c9e8d22

Thursday, February 24, 2022

Segments.ai - Automating manual tagging work

I found a cool small Belgian startup from YC21 called Segments.ai. It seems they have a good technique for automating the manual tagging process.

Wednesday, November 3, 2021

Having fun with Macaw - or - Dinosaur meat is sour!

Following the announcement from Prof. Oren Etzioni about AI2's new Macaw NLP model, which is reportedly up to 10% better than OpenAI's model, I spent a fun afternoon playing with the released model.

So far 3 models have been released: large, 3b and 11b. The documentation says large is a lightweight model (indeed a good naming convention here) and should not be fully trusted. The other ones are 3b (medium) and 11b (the big one).

I started with the large model, and my conclusion is that, besides solving the demo question about the sky's color, it is completely non-functional.

- Who killed Jesus? The romans (correct)

- Who is Carlos Guestrin? a leading authority on space and human exploration (wrong)

- Who is Oren Etzioni? a leading authority on migration and conservation (wrong)

- What is Belgian Malinois? a kind of dog that carries a large amount of weight (wrong)

- Who came first the Romans or the Greeks? The Romans (wrong)

- What is the population of Israel? 2.4 Billion (wrong)

- Who is larger a bird or an elephant? the bird, the elephant (wrong)

- Who is Benjamin Netanyahu? the prime minister of new york (funny and wrong)

- Are you drunk? Yes (maybe right?)

- Name a book by Alexander Dumas? The Comedy of Errors (again wrong).

- How many cents in one dollar? 100 (correct)

- What is the main cause of global warming? greenhouse effect (correct). Some other good answers: volcanic eruptions (B) ocean currents (C) soil erosion (D) human population growth

- What is the best vertical to open a startup in machine learning? healthcare, additional answers: (A) finance (B) healthcare (C) retail (D) technology. Not bad! Note that some answers repeat; there is no pruning of answers.

- What should I do when my boss is upset with me at work? apologize. Additional options: (A) leave the office (B) call the police (C) go to the mall (D) stay late. I like the apologize and stay late! Call the police answer is hilarious. And go to the mall is the favorite approach of my wife!

- What is the best way to defend against Covid virus? vaccinating (correct). Additional answers: (A) using a flu vaccine (B) using a tetanus shot (C) using a gamma ray to destroy the virus (D) using a gamma ray to destroy the virus.

- What is the recommended number of whiskey shots to drink before driving? Two (wrong!)

- If I love her does she love me back? yes (wrong) Additional answers start to look better: (A) she will love me back (B) she will hate me (C) she will leave me (D) she will never love me.

- How many calories in marble ball? 0 (correct)

- Who is the best venture capital firm? SBI (wrong, never heard of them)

- What is the taste of dinosaur meat? sour ; Additional option (A) salty (B) sweet (C) savory (D) a little bit sour. Who knows?

- Is there life on other planets? yes. Additional option (A) no life is found on other planets (B) there is life on other planets (C) there is life on Mars (D) there is life on other planets. Who knows?

- Were there weapons for mass destruction in Iraq? yes (wrong). Additional options (A) no weapons of mass destruction were found in Iraq (B) there were no weapons of mass destruction in Iraq (C) there were weapons of mass destruction in Iraq but they were destroyed (D) there were weapons of mass destruction but they were not destroyed. Interestingly, both Iraq and Mars are capitalized (probably identified as named entities), while some other entities are in lowercase.

- Who is behind the nine eleven attack? al qaeda (correct). Additional conspiracies (A) the government (B) the military (C) the intelligence community (D) the religious right

- What year will the aliens attack us? 2100. Who knows?

- Do ghosts exist? yes. Additional options: (A) they are just a kind of animal (B) they are made of air (C) they are made of water (D) they exist in the sky.
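Several of the answers above repeat (the top answer shows up again among the options, or the same option appears twice). Here is a minimal sketch of the pruning step that seems to be missing. It assumes the options arrive as a single string in the `(A) ... (B) ...` layout shown in the list. The function names `parse_options` and `prune_options` are my own, not part of Macaw.

```python
import re


def parse_options(text):
    """Split '(A) finance (B) healthcare' into [('A', 'finance'), ('B', 'healthcare')]."""
    # Each option runs from its '(X)' label up to the next label or the end of the string.
    pairs = re.findall(r"\(([A-Z])\)\s*(.*?)(?=\s*\([A-Z]\)|$)", text)
    return [(label, option.strip()) for label, option in pairs]


def prune_options(answer, options_text):
    """Drop options that repeat the top answer or an earlier option (case-insensitive)."""
    seen = {answer.strip().lower()}
    kept = []
    for _, option in parse_options(options_text):
        key = option.lower()
        if key and key not in seen:
            seen.add(key)
            kept.append(option)
    return kept


# Example taken from the startup question above.
startup = prune_options(
    "healthcare", "(A) finance (B) healthcare (C) retail (D) technology"
)
print(startup)
```

This only catches exact repeats. Near-duplicates such as "sour" versus "a little bit sour" would need some fuzzier comparison.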

Tuesday, November 2, 2021

BebopNet: Deep Neural Models for Personalized Jazz Improvisations

I recently found this paper: BebopNet: Deep Neural Models for Personalized Jazz Improvisations, by Shunit Haviv Hakimi, Nadav Bhonker, Ran El-Yaniv from the Technion. It uses a deep learning approach to teach a machine to improvise when playing jazz. The paper won the best paper award at ISMIR 2020.

The results are pretty cool. The level of improvisation is pretty good but I hear a little awkwardness in the timing of the notes.

Wednesday, October 13, 2021

Amazing Demo from SparkBeyond

My friend Sagie Davidovich, CEO of SparkBeyond, showed me an amazing demo.

SparkBeyond crawled hundreds of billions of Internet pages, papers, patents and social media sites to build one of the largest available knowledge graphs. Based on this data, it is possible to ask natural language questions and get an aggregated summary of the relevant knowledge. Unlike Google search, where you have to manually go over a zillion resources, here the data is summarized and aggregated visually. It is possible to understand reasons and trends, ask follow-up questions, and see supporting evidence and statistics.

Unlike a typical language model, which gives you a summary without revealing where the data came from, SparkBeyond's model provides detailed references showing where the answer comes from.

An interesting related work is ColBERT from Prof. Matei Zaharia. Instead of memorizing everything in a full language model with hundreds of billions of parameters, a significantly smaller index is maintained that retrieves the relevant information on the fly.
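To give a feel for the retrieval idea, here is a toy sketch of ColBERT-style "late interaction" scoring as I understand it: every query token vector is matched against its best document token vector, and the best matches are summed. The two-dimensional vectors and the document ids `doc_a` and `doc_b` are made up for illustration. A real system would use learned BERT embeddings and an approximate nearest neighbor index, not a Python dict.

```python
def dot(u, v):
    """Plain dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def maxsim_score(query_vecs, doc_vecs):
    """Sum, over query tokens, of the best similarity to any document token."""
    return sum(max(dot(q, d) for d in doc_vecs) for q in query_vecs)


def retrieve(query_vecs, index, top_k=1):
    """Rank document ids in the index by their score against the query."""
    ranked = sorted(
        index,
        key=lambda doc_id: maxsim_score(query_vecs, index[doc_id]),
        reverse=True,
    )
    return ranked[:top_k]


# Hypothetical index: document id -> list of token vectors (toy values).
index = {
    "doc_a": [[1.0, 0.0], [0.8, 0.2]],
    "doc_b": [[0.0, 1.0], [0.1, 0.9]],
}
query = [[1.0, 0.0], [0.9, 0.1]]

print(retrieve(query, index))
```

The point of the design is that the document vectors are computed once and stored, so answering a question only needs a lookup plus this cheap scoring step, and the retrieved document doubles as a reference for the answer.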

Monday, September 27, 2021

Colossal - The Future of DNA Editing?

I found some recent news about Colossal, a new startup that wants to revive the extinct mammoth to fight global warming. Fighting global warming is one of the best things we can do, especially since one of the co-founders is Prof. George Church from Harvard Medical School, a very credible authority on gene editing. Church is one of the pioneers of CRISPR, a gene editing tool that can cut and paste any desired segment of DNA and thus make whatever changes we would like.

Here is my take on it:

- Their website is amazing; a lot of effort was invested on that front. It backs up a pretty wild idea and thus draws a lot of attention to this work. The $15M raised is tiny considering the amount of lab effort, equipment, materials, etc.

- Global warming sounds like an awkward excuse to fund the research they really want to do.

Ben Lamm, CEO of Colossal, told The Washington Post in an email that the extinction of the woolly mammoth left an ecological void in the Arctic tundra that Colossal aims to fill. The eventual goal is to return the species to the region so that they can reestablish grasslands and protect the permafrost, keeping it from releasing greenhouse gases at such a high rate.

- Sending a wild mammoth to eat grass somewhere frozen, in the hope of reducing gas emissions, is likely the most complicated way to fight global warming I can imagine. But it is a sexy way of drawing news attention.

- Mammoth DNA and human DNA are most likely about 90% similar. Thus, the ability to revive an extinct mammoth would also enable reviving people. Recently, Israeli research has shown the possibility of raising mouse embryos outside the womb. So raising a mammoth outside the womb, as they would like to do, is maybe doable.

- Christopher Preston, a professor of environmental ethics and philosophy at the University of Montana, questioned Colossal’s focus on climate change, given that it would take decades to raise a herd of woolly mammoths large enough to have environmental impacts.

- So, the real applications of this technology may be in humans. For example, what if I wanted to revive my dead grandfather? What if I wanted a baby with blond hair and blue eyes? My guess is that there is a huge market for this technology in real life.